Today, we’re discussing ChatGPT prompt engineering for beginners. You’ll quickly learn the most efficient way to get high-quality AI-generated content with three simple elements.

But first, what is prompt engineering for ChatGPT? Prompt engineering refers to how you format content, what you feed to ChatGPT, and what output you want from the AI.

Basic prompt engineering has three main elements: input, instruction, and output.

If you get all three right, ChatGPT is much more likely to give you the results you want, limiting the time you’ll spend editing on the back end before publishing.

Let’s go through three specific examples of how this works.


The Three Elements

What do these three main elements entail?

- Input: the information you feed into the prompt

- Instructions: what you want ChatGPT to do with that information

- Output: how you want ChatGPT to format the output
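If you work through the API or the Playground rather than the chat window, it can help to keep the three elements as separate pieces and join them only when you send the prompt. Here’s a minimal Python sketch of that idea. The function name `build_prompt` and the section labels are hypothetical, not a syntax ChatGPT requires.

```python
# A minimal sketch: keep input, instruction, and output format separate,
# then join them into one prompt string. The section labels are illustrative.

def build_prompt(input_text: str, instruction: str, output_format: str) -> str:
    """Combine the three prompt elements into a single prompt string."""
    sections = [
        f"Input:\n{input_text.strip()}",
        f"Instruction:\n{instruction.strip()}",
        f"Output format:\n{output_format.strip()}",
    ]
    # A blank line between sections keeps each element visually distinct.
    return "\n\n".join(sections)


prompt = build_prompt(
    input_text="Quiz topic: What type of coffee beans are right for you?",
    instruction="Write a product recommendation quiz on this topic.",
    output_format="Title\nDescription\nQuestion 1 with answer choices\nQuestion 2 with answer choices",
)
print(prompt)
```

Keeping the elements separate makes it easy to change one (say, the input) while holding the other two fixed, which is how the examples below are compared.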

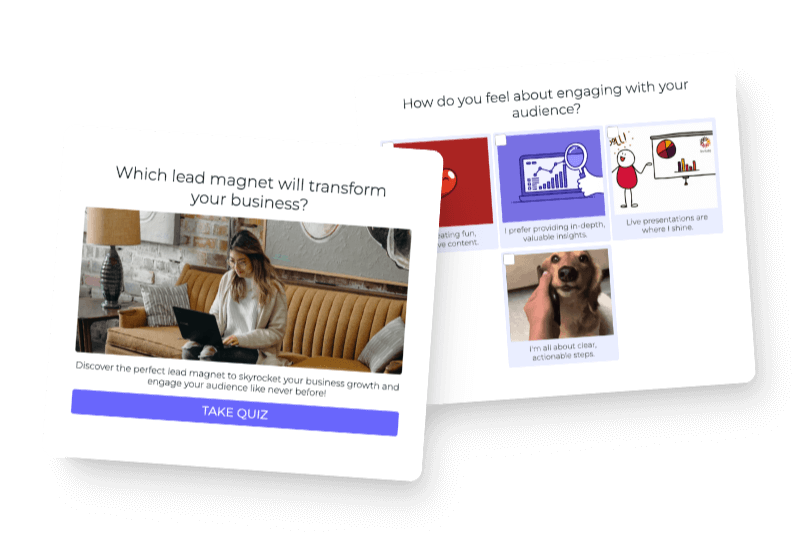

The examples here are all for quizzes because that’s our domain of expertise. However, the process is simple enough that you can use these elements for just about any type of content.

Training ChatGPT

We’ll start with the most basic prompt and show you how ChatGPT improves as you train it. ChatGPT is at its worst when it generates text with nothing to go on: it makes things up until it has been shown the type and style of content you want in the output.

Here’s a Google blog post with a great graphic of how the training works, explaining how AI takes text, parses it apart, and then converts it into something else.

Today we’ll be asking the AI to create a product recommendation quiz. Let’s go through some examples of prompts you can enter, ordered from the least to the most specific instructions about the output we want.

Use ChatGPT Prompt Engineering for a Product Recommendation Quiz

ChatGPT Prompt Engineering Without Context of the Quiz

Let’s look at making a product recommendation quiz by using an example of another product recommendation quiz. In this example, we give ChatGPT no context regarding the actual content of the quiz.

Step 1: Instruction

ChatGPT understands concepts because it’s trained on sources like Wikipedia and research articles. For example, when we say “product recommendation engine framework,” it knows what that means because the topic has been written about since 1999. It also knows what “interest-based filtering” is for the same reason. This is all part of instruction design: you need to understand what ChatGPT knows and doesn’t know.

Ironically, no one really understands what it knows and doesn’t know, so you have to test it a lot. You might tell it to write in a certain style only to find out it doesn’t know what that means, then ask it to write in another style and find that it executes perfectly.

The only input we gave in this first example was the quiz question: “What type of coffee beans are right for you?”

We asked the platform to make a “What type of coffee beans are right for you?” quiz and to follow a specific quiz format. Check out the format below. You’ll also see we asked ChatGPT to write in the same tone as the example provided.

The quiz format is what we would consider the output:

ChatGPT is pretty useless unless you give it an example to follow. Most of what you see online about ChatGPT writing essays and other content from thin air neglects to mention that the content is generic, boring, and basic.

Other people might have different perspectives, but ChatGPT is a transformer model that needs to be trained. It’s not meant to pull information out of thin air, and expecting it to is why so many people run into trouble.

The quiz questions above were written from scratch by our CEO, Josh Haynam—not by any sort of AI. Below is more outline information that was used in the output:

If you don’t give a similar example, you won’t get the results you’re after. ChatGPT will either make stuff up or give you crazy long (or short) results. It can also give you “yes or no” answer choices. It’s all over the place unless you’re specific about what you want.

The output (shown in green) from ChatGPT actually gave us some great results. It gave us a quiz title, a short description, and quiz questions.

However, the content is super generic and basic. The output in the image below reads like a Wikipedia article, likely because ChatGPT is trained on Wikipedia articles.

Next, let’s note an important setting, the temperature, which is on the left-hand side of the screen:

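This setting is available in the OpenAI Playground but not in ChatGPT. Temperature controls how random the model’s word choices are: lower values give more predictable, focused text, while higher values give more varied text. ChatGPT is tuned in a particular way that doesn’t work for every case, so if you’re serious about learning prompt design, use the Playground to make quizzes, where you can adjust the temperature, the maximum length, and numerous other settings.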
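To make the temperature setting more concrete, here’s a simplified Python sketch of the usual idea behind it: the model’s raw scores for candidate next words are divided by the temperature before being turned into probabilities. The scores are made-up numbers, and this illustrates the general technique rather than OpenAI’s exact implementation. The sketch only accepts a positive temperature.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores into probabilities, scaled by a temperature."""
    if temperature <= 0:
        raise ValueError("this sketch requires a positive temperature")
    scaled = [x / temperature for x in logits]
    # Subtract the largest value before exponentiating for numerical stability.
    largest = max(scaled)
    exps = [math.exp(x - largest) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next words.
logits = [2.0, 1.0, 0.5]
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it shows the top word’s probability shrinking as the temperature rises, which is why higher temperatures produce more varied (and sometimes more off-topic) text.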

Now that we’ve discussed the most generic and basic content, let’s move on to the second most basic version.

Using a Real Quiz as an Example in the Prompt

Almost everything in the second example stayed the same, including the instructions. The main difference was that we fed ChatGPT information about a specific online store. For today’s purpose, we used Henry’s House of Coffee as an example. They have an awesome quiz on their site that AI did not make, and they have been kind enough to let us test the AI models on their site.

Side note: Their company and coffee are super cool. Check them out!

We also added more input than in the first example. In this case, we added the name of the coffee house, the URL, and a short one-sentence description (see below).

We didn’t provide an example quiz. We just gave it the format without any text because we used more input than before.

When you start giving ChatGPT more detailed input, you don’t want to also give it a full example, as we did in the first generation.

You only want one transformation. In this case, you want ChatGPT to go from the website information to the coffee quiz. ChatGPT doesn’t handle multi-part transformations well, so if you’re feeding in a bigger input, don’t also feed it a full example.

The output in the second example, shown above, was much better than in the first example. It’s more personalized, and it uses the brand name. This is what we recommend our customers start with because it’s the fastest way to get something good.

At the end of the day, you’ll still have to go through the content and humanize it so it accurately reflects your voice. Even if you feed it a lot of your own text to start with, you have to edit the content to get everything right. Otherwise, it will be obvious that AI wrote your content.

Take It Further with Your Actual Products

In example three, we gave the most input, which led to the best results. We used the same instructions, but this time, the input included a whole list of products with short descriptions.

You could go even further and write a full paragraph to describe each product. We tested that, and it worked better than just the short descriptions in the image above.
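If your catalog lives in a spreadsheet or database, you can generate the product portion of the input instead of typing it by hand. This is a minimal Python sketch. The `Product` class, the `format_product_input` function, the store name, the URL, and the products are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    description: str  # a short line or a full paragraph both fit here


def format_product_input(store_name: str, url: str, products: list[Product]) -> str:
    """Turn store details and a product list into the input section of a prompt."""
    lines = [f"Store: {store_name}", f"URL: {url}", "Products:"]
    for product in products:
        # One bullet per product keeps each description tied to its name.
        lines.append(f"- {product.name}: {product.description}")
    return "\n".join(lines)


catalog = [
    Product("House Blend", "A medium roast with notes of chocolate and nuts."),
    Product("Morning Light", "A bright, fruity light roast for pour-over."),
]
print(format_product_input("Example Coffee Co.", "https://example.com", catalog))
```

Swapping the short descriptions for full paragraphs only changes the `description` values; the rest of the prompt stays the same.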

We used the same output format as the previous example, which gave us an even better quiz output.

It was written with the same language that Henry’s House of Coffee uses. It also has really solid outcomes with specific products. This would cut a lot of time spent editing on the back end.

Recap of ChatGPT Prompt Engineering for Beginners

To recap, you have your instructions, your input, and your output.

Your instructions can be tweaked and modified as much or as little as you want, but prompt engineering has diminishing returns: past a certain point, the AI gets confused by too many instructions. You have to find the right amount of instruction to give alongside your inputs.

As the third example shows, you can go pretty far with input: the more you give it, the more it has to learn from, and the better the transformation will be. Always focus on figuring out the right inputs.

Getting the output format right takes a lot of testing for each element. You have to find out what the AI does and doesn’t understand to ensure it knows how to format the content.
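Because so much of this is trial and error, a small script can catch outputs that drift from the format you asked for, such as a missing description or the wrong number of questions, before you spend time reading them closely. This Python sketch assumes a hypothetical format with `Title:`, `Description:`, and numbered `Question N:` lines; the function name `check_quiz_format` is also hypothetical, so adjust the checks to match your own format.

```python
import re

# Hypothetical format: a "Title:" line, a "Description:" line, then
# "Question 1:", "Question 2:", ... lines. Adjust to the format you request.
REQUIRED_PREFIXES = ("Title:", "Description:")
QUESTION_PATTERN = re.compile(r"^Question (\d+):")


def check_quiz_format(text: str, expected_questions: int) -> list[str]:
    """Return a list of format problems in a generated quiz (empty if none)."""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    problems = []
    for prefix in REQUIRED_PREFIXES:
        if not any(line.startswith(prefix) for line in lines):
            problems.append(f"missing '{prefix}' line")
    # Collect question numbers in the order they appear.
    numbers = [int(m.group(1)) for line in lines if (m := QUESTION_PATTERN.match(line))]
    if numbers != list(range(1, expected_questions + 1)):
        problems.append(f"expected questions 1..{expected_questions}, found {numbers}")
    return problems


sample = "Title: Find Your Coffee\nQuestion 1: How do you brew at home?"
print(check_quiz_format(sample, expected_questions=2))
```

A check like this won’t tell you whether the content is good, but it quickly separates prompt variations that keep the format from ones that don’t.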

We gave very specific instructions for some of the elements. For reference, we included the outputs again below.

This is very much a trial-and-error method because there’s no fixed list of what it does and doesn’t understand. You just have to be comfortable testing lots of variations. Once you find something that works, stick with it; it should keep working over and over again.

Final Thoughts

A lot of people will tell you that you have to learn a TON to generate quality, brand-specific AI content. However, after playing with ChatGPT for at least 300 hours and training it on very specific data sets, we found that these three elements matter most.

It all comes down to the input and instructions you use to prompt the AI, and you’ll need to nail down each of those. Finally, to get the most effective results, you have to figure out how to format the output, whether you’re using ChatGPT or the OpenAI Playground. (We recommend the Playground.)

You can then codify your prompts and content in the API and build fine-tuned models. To do this, though, you first have to work through the three elements and be happy with the results.
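As a rough sketch of that next step: fine-tuning data is typically a file of prompt and approved-output pairs. The Python example below assumes OpenAI’s chat-style JSONL format (one JSON object per line, each with a `messages` list of `system`, `user`, and `assistant` entries); check the current fine-tuning documentation before relying on it. Mapping the instruction to the system message and the input to the user message is our own assumption, and the function name and sample text are hypothetical.

```python
import json


def to_finetune_record(instruction: str, input_text: str, approved_output: str) -> str:
    """Build one JSONL line pairing a prompt with an output you have approved."""
    record = {
        "messages": [
            {"role": "system", "content": instruction},
            {"role": "user", "content": input_text},
            {"role": "assistant", "content": approved_output},
        ]
    }
    # ensure_ascii=False keeps curly quotes and accents readable in the file.
    return json.dumps(record, ensure_ascii=False)


examples = [
    (
        "Write a product recommendation quiz in the given format.",
        "Store: Example Coffee Co.\nProducts:\n- House Blend: A medium roast.",
        "Title: Find Your Coffee\nDescription: A short quiz.\nQuestion 1: How do you brew?",
    ),
]
jsonl = "\n".join(to_finetune_record(*example) for example in examples)
print(jsonl)
```

Saved to a `.jsonl` file, each line pairs a prompt built from the three elements with an output you’d be happy to publish after editing.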

This approach to prompt engineering changes how you get value from ChatGPT: instead of expecting content from thin air, you use the model for something really practical.

Editor’s note: This article was originally a transcript reworked by Sophia Stone, Interact Marketing Intern