Welcome to prompt engineering, part two! Here, we’ll go through some more advanced ways to optimize prompts for consistent and high-quality output. Once you really learn how to work with AI to produce content, it is game-changing.

We’ve been optimizing prompts and getting great results consistently. It’s made a huge difference in how much time we spend editing the AI’s output. If you’re not at this stage yet, learn how to get started with prompt engineering.

Basic Prompt Engineering

Below is a quick summary of basic prompt engineering before we dive in.

There are three main components to any good prompt:

- Input—the information you’re feeding the prompt in ChatGPT (or whatever AI platform you’re using)

- Instruction—what you want ChatGPT to do with that information

- Output—how you want ChatGPT to format the output

ChatGPT, like every other large language model, tends to struggle if you ask it to start from scratch, but it does well when you give it specific information (input) to work with. Your instruction should communicate your goal to ChatGPT: what you want it to do with that input. Your output specification should communicate how you want the AI model to format what it gives you.

Check out part one for more in-depth instructions.

Optimizing Prompts

Let’s go through how to construct each of the three main components of prompt engineering, including how to lay them out and use markup language in your prompts so that the AI can execute.

There’s a lot that goes into this. The way we write these prompts plays to the AI’s main strength: manipulating one form of content into another form of content within specific parameters. The AI needs a “dialed-in” way of manipulating whatever you give it in order to produce a good output.

Below is the prompt we’ll go through to show you a few of the main components:

When you get into optimizing prompts, there are three things to key in on:

- Markup language—If you’re familiar with HTML, that’s a markup language. It’s a fancy way of saying that you’re marking up raw text so that it has reference points you can refer back to.

- Concrete Order of Operations—You want to give the AI a linear set of instructions. AI isn’t effective when you ask it to run multiple things simultaneously. It’s best to provide an ordered step-by-step list.

- Specific instructions for the output and style/tone of the text—Give the AI specific instructions on how to format the output, including the tone, style, length, tense, and point of view (first, second, or third person) you want it to write in.

Markup Language

The first thing to highlight in optimizing your prompt engineering is the markup language. You don’t have to follow HTML, but if you know HTML, use it because it’s easy. If you don’t know HTML, you can make your own language, which we actually prefer because, well, it’s more fun to make up your own language.

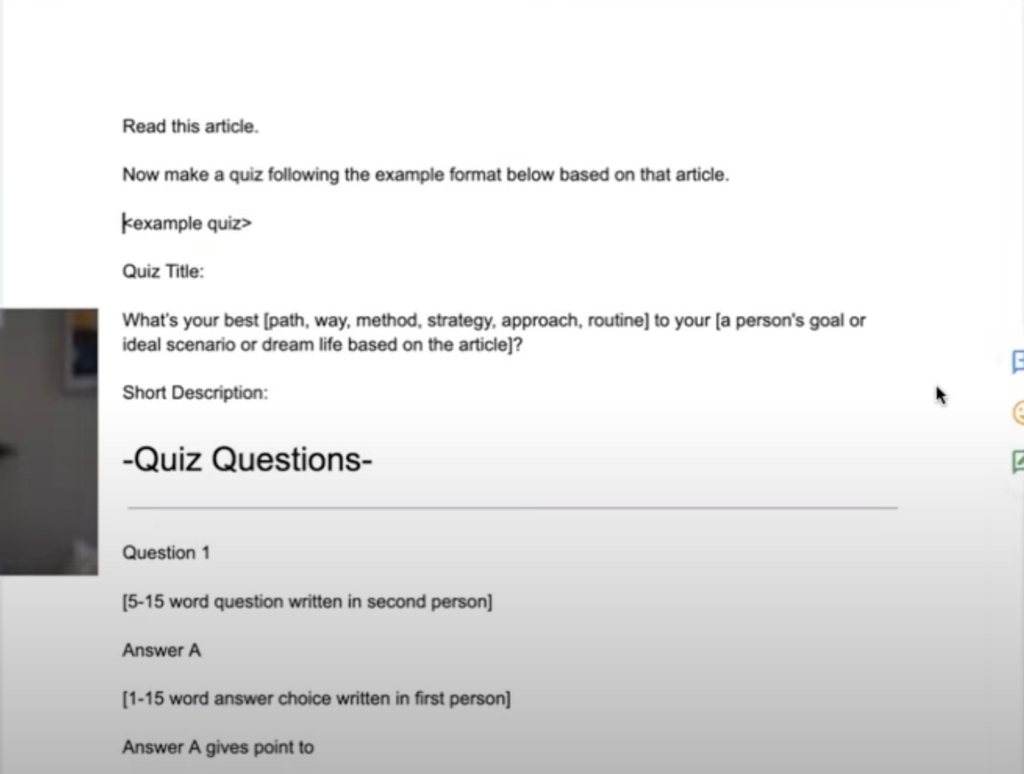

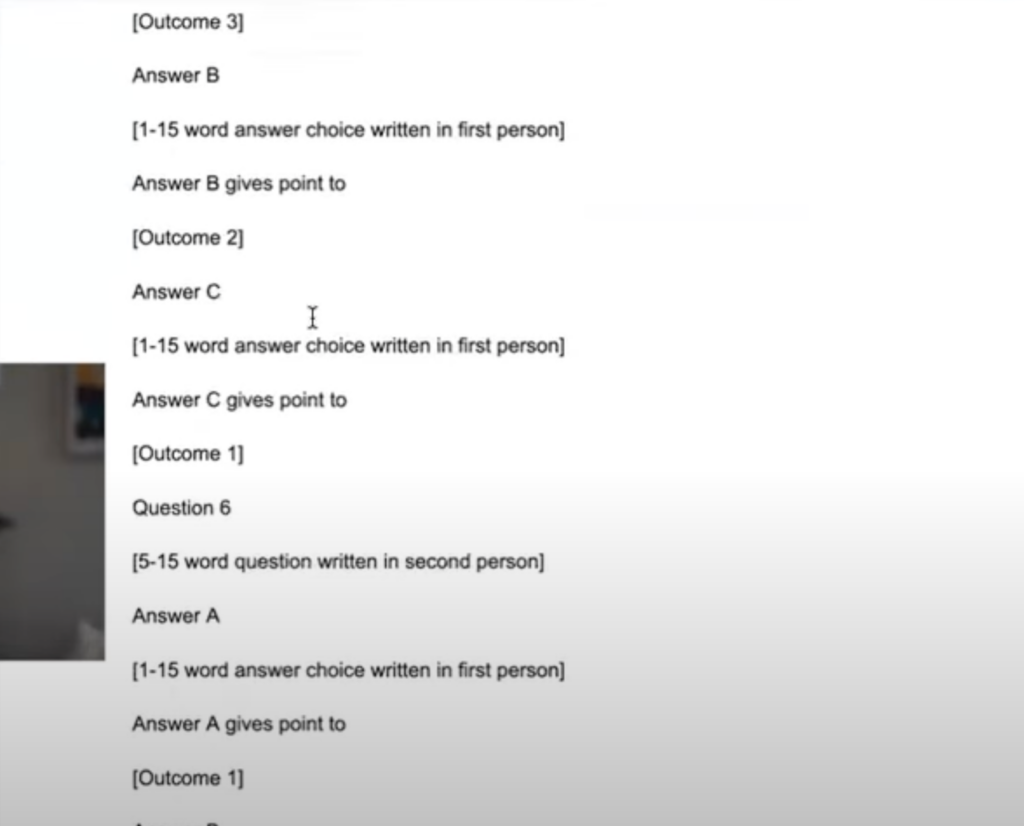

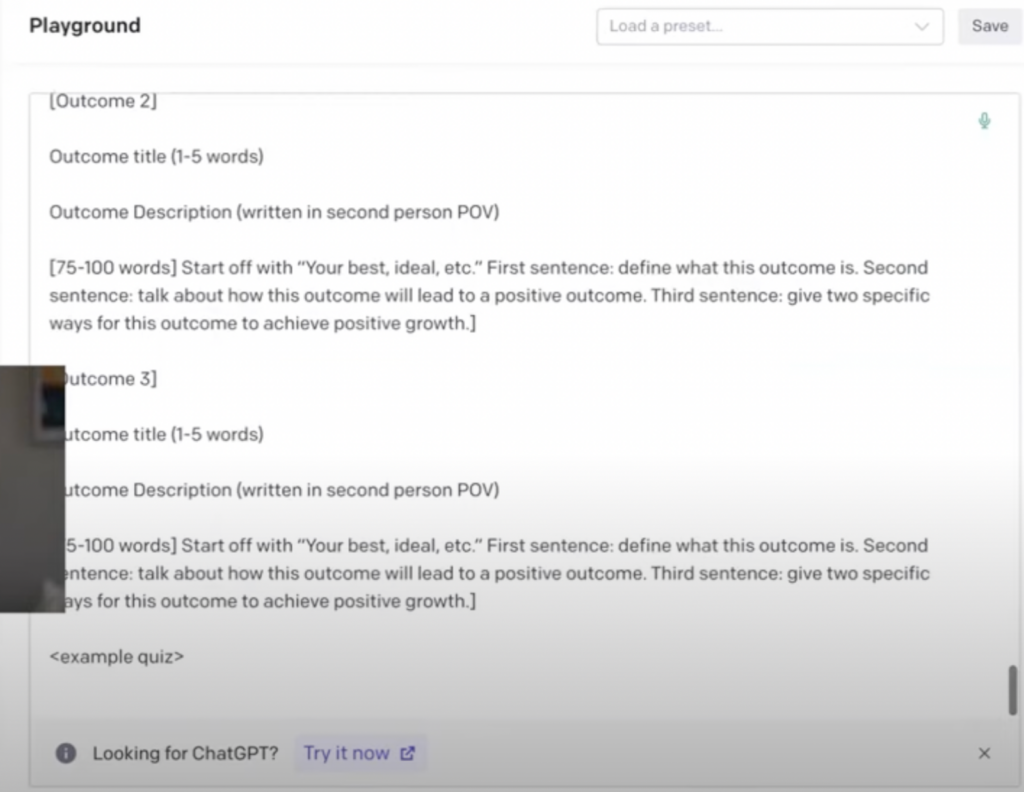

You can see in the images how these different sections are marked up, specifically the “example quiz.”

We put brackets around the places where we’re telling the AI to create answer choices, and we use different markers for the different components.

Now, one thing to note is that we’re making this prompt in a Google Doc so we can make the text bigger. When you copy the prompt into OpenAI, text formatting such as font size does not carry over (so don’t use text size as your markup language).

Markup language is brackets, quotation marks, caret signs, or whatever you want to use to mark up your text. The AI can get confused if you skip this step: it will pull random bits of information from different parts of your prompt and guess which part you meant. It will stay confused unless you mark up everything.

Markup language is the number one component. If you don’t do anything else, just do that, and your prompts will be much better.
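As a rough illustration of the idea, here is a minimal Python sketch that wraps each part of a prompt in an opening and closing tag. The helper name `mark_up`, the tag names, and the placeholder text are all our own assumptions for this example; any consistent marker would work just as well.

```python
def mark_up(tag: str, text: str) -> str:
    """Wrap text in an opening and closing tag so each section has clear boundaries."""
    return f"<{tag}>\n{text.strip()}\n</{tag}>"


# Hypothetical section names and placeholder text, for illustration only.
article = "Paste the article text here."
example_quiz = "Quiz title: [quiz title]\nQuestion 1: [question text]"

# Join the marked-up sections with a blank line between them.
prompt = "\n\n".join([
    mark_up("article", article),
    mark_up("example_quiz", example_quiz),
])

print(prompt)
```

Because every section now has a name, the instructions can refer to “the article” or “the example quiz” without the AI having to guess where each one starts and ends.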

Concrete Order of Operations

The second thing for optimizing your prompt engineering is to give AI a concrete order of operations or build order. If you tell it to do everything simultaneously, it will get confused and give you poor outputs.

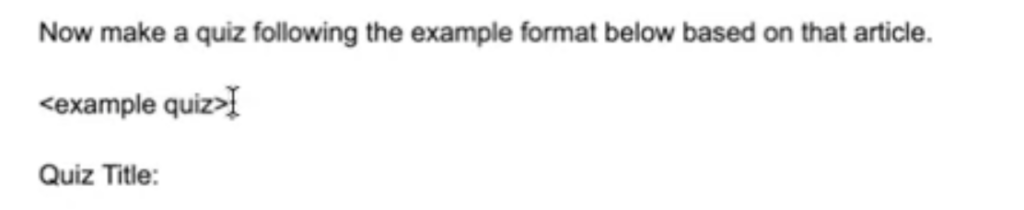

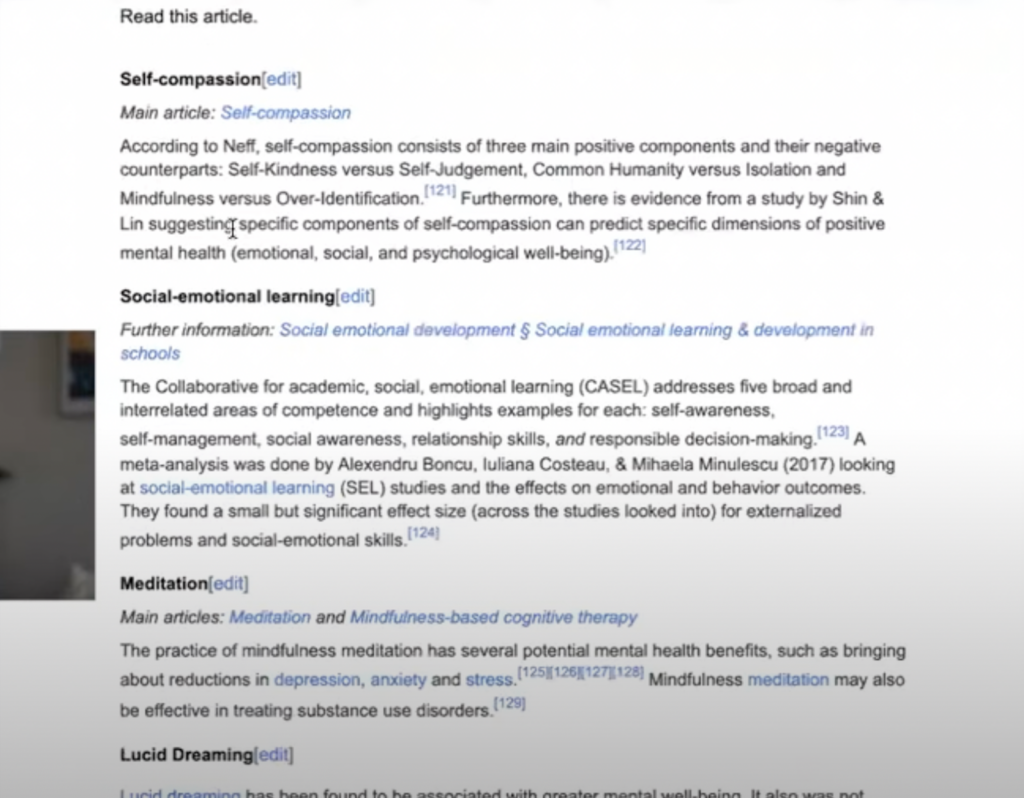

In this example, we’re instructing the AI to “Read this article.” When you include a specific article for the AI to read, it will pull the information directly from it.

In this case, it’s important that the AI reads the article before it makes our quiz. If we tell it to do both actions at the same time, the output tends to fall flat and include odd content that makes no sense.
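One simple way to keep the order explicit is to number the steps before they go into the prompt. The sketch below is only an illustration: the helper `numbered_steps` is a hypothetical name, and the wording of the second step is our own paraphrase rather than the exact prompt text.

```python
def numbered_steps(steps: list[str]) -> str:
    """Turn a list of instructions into a numbered, one-per-line build order."""
    return "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))


# The first step is quoted from the prompt; the second is a paraphrase (assumption).
steps = [
    "Read this article.",
    "Make a quiz based on the article, following the example quiz format.",
]

instructions = numbered_steps(steps)
print(instructions)
```

Keeping the steps in a list also makes it easy to add, remove, or reorder a step later without renumbering by hand.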

Going back to the markup language, you have your example quiz in the sectioned-off area:

Then, there’s a closing tag at the end because we really want this to be marked off from the instructions. Make a clear distinction between the output format and the instructions.

Including Tone for Prompt Engineering

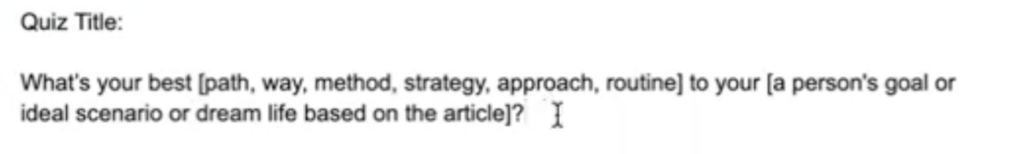

The third important component when optimizing prompts is tone. Let’s take a closer look at the quiz title we are using:

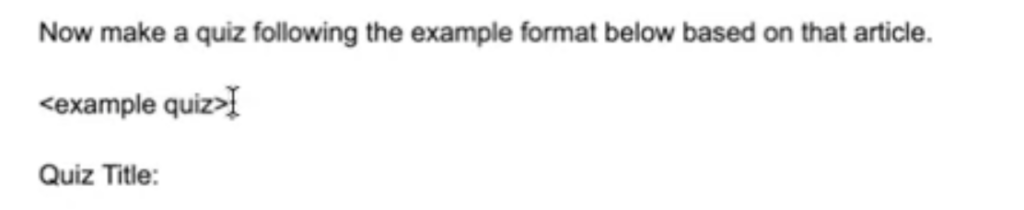

You can see it says, “What’s your best [path, way, method, strategy, approach, routine] to your [a person’s goal or ideal scenario or dream life based on the article]?”

In the first half of the title, we’re telling the AI exactly how we want the quiz title formatted, but it can choose among the words separated by commas. You’ve seen this convention if you’ve ever opened a CSV (comma-separated values) file; separating items with commas is a common way to mark up a list.

In the title’s second half, we use “or” in the bracket.

This is to show that you don’t have to be super stringent with markup language. We use commas and then switch to using “or.” Play around with what works for you; you don’t have to follow a specific language. This can be easier because you don’t always have to be so specific.
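To see what choices a template like this actually offers, you can pull the options out of each bracket. The Python sketch below uses the quiz title from above; the function name `bracket_options` is hypothetical, and the split on commas and the word “or” is deliberately naive, just enough to mirror the two separators we used.

```python
import re

# The quiz title template from the prompt, with straight apostrophes.
TITLE_TEMPLATE = (
    "What's your best [path, way, method, strategy, approach, routine] "
    "to your [a person's goal or ideal scenario or dream life based on the article]?"
)


def bracket_options(template: str) -> list[list[str]]:
    """Return the list of options found inside each [...] group.

    Options are split on commas or on the standalone word "or".
    """
    groups = re.findall(r"\[([^\]]*)\]", template)
    return [
        [opt.strip() for opt in re.split(r",|\bor\b", group) if opt.strip()]
        for group in groups
    ]


options = bracket_options(TITLE_TEMPLATE)
for i, group in enumerate(options, start=1):
    print(f"Bracket {i}: {group}")
```

A quick check like this is a handy way to confirm that a bracket reads the way you intended before you hand the prompt to the AI.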

Later on in this document, we tell AI to write in a specific way:

In the outcome descriptions, we tell the AI to start with “Your best, ideal, etc.,” which allows it a little more creativity. Then we tell it that the first sentence should define the outcome, the second sentence should explain how this outcome leads to a positive result, and the third sentence should give two specific ways to use this outcome for positive growth. We tell it not just to write text but, more specifically, how to write the text.
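The sentence-by-sentence structure described here can be written down as data and turned into instructions. This is a minimal sketch under our own assumptions: the function name, the exact instruction wording, and the bracketed opening are illustrative, not the literal text of our prompt.

```python
# What each sentence of an outcome description should do, in order.
SENTENCE_ROLES = [
    "define the outcome",
    "explain how this outcome leads to a positive result",
    "give two specific ways to use this outcome for positive growth",
]
ORDINALS = ["first", "second", "third"]


def outcome_instruction(opening: str, roles: list[str]) -> str:
    """Build one instruction line for the opening plus one line per sentence role."""
    lines = [f'Start the description with "{opening}".']
    for ordinal, role in zip(ORDINALS, roles):
        lines.append(f"The {ordinal} sentence should {role}.")
    return "\n".join(lines)


# The bracketed opening lets the AI pick a word, as in the quiz title (assumption).
instruction = outcome_instruction("Your [best, ideal]", SENTENCE_ROLES)
print(instruction)
```

Spelling out the job of each sentence is what moves the prompt from “write a description” to “write the description this way.”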

We already fed the AI the article, so it knows where to pull information. In this example, we used a Wikipedia article because its content is openly licensed for reuse with attribution (for other sources, you may need permission, and you should always cite your source). However, it’s even better to have the AI read something you personally wrote, so that it knows your style.

The next step is to copy the article and paste it directly below the prompt titled “Read this Article.” It should look like this:

Finally, you’ll copy the entire document into the OpenAI Playground. You’ll see it gets pretty long as we build it out. We used a temperature of 0.48 in the Playground because we wanted the content to be somewhat random but not super random. Click submit once you paste everything into the AI platform.
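If you’re curious why a lower temperature makes the output less random, here is a small Python sketch of the usual idea: the model’s scores for candidate words are divided by the temperature before being turned into probabilities. The scores below are made-up numbers for illustration, and real models have more going on in their sampling than this toy example shows.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores into probabilities, sharpened or flattened by temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate words (assumption, not real model output).
toy_logits = [2.0, 1.0, 0.5]

for temperature in (0.48, 1.0):
    probs = softmax_with_temperature(toy_logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At 0.48 the top-scoring word takes a larger share of the probability than it does at 1.0, so the AI strays from its most likely choice less often while still leaving some room for variety.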

Quiz Results

With this kind of prompt engineering, the OpenAI Playground produces a great quiz. It’s formatted in a way that’s super easy to copy and paste into whatever tool you’re using.

At Interact, this is the approach we’re using to build our own tool right now. We want these prompt engineering instructions to be far better still, so we’re getting really specific.

Main Takeaways of Prompt Engineering

The main takeaway from learning this optimization process is that you can get extremely specific. The more specific you get, the better. This goes for marking up your text and specifying the tone, length, and point of view you want.

When you do this, the AI gives you much more consistent, high-quality output.

We hope you find this helpful. We are building an AI tool for creating quizzes, and we’re learning a lot about how to prompt effectively for scaling quizzes.

Editor’s note: This article was originally a transcript reworked by Sophia Stone, Interact Marketing Intern